Infrastructure should execute intent, not reconcile it later.

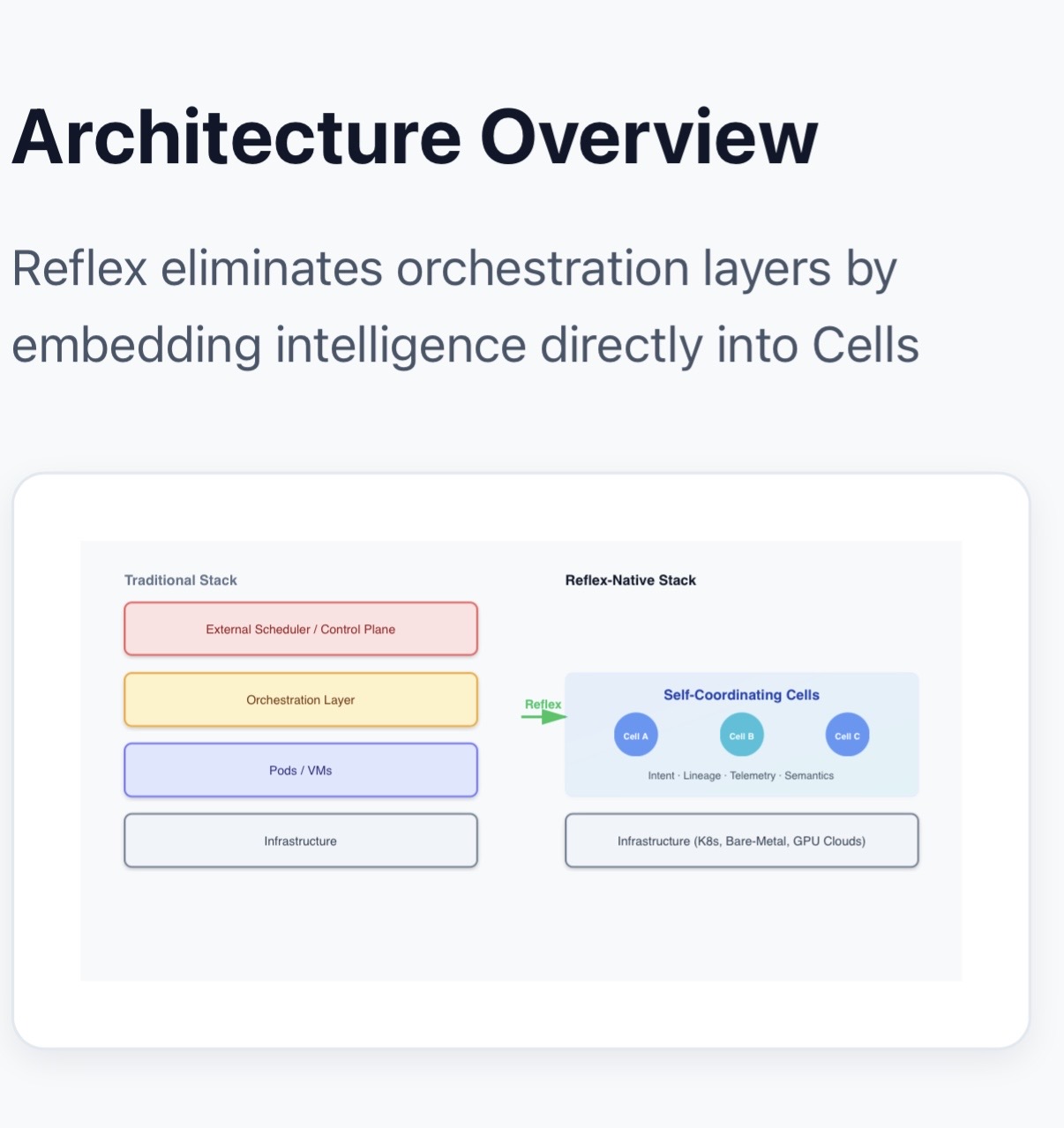

Celluster removes orchestration, controllers, schedulers, and agents from infrastructure.

No control planes. No reconciliation loops. No infrastructure babysitting.

Celluster is a reflex native compute substrate for AI workloads, GPU fabrics, and distributed systems, removing orchestration, controllers, schedulers, and agents by moving control into execution itself.

Private technical demo completed. Enterprise pilot conversations active. Patent pending: provisional filed, non-provisional in progress. Internship and academic collaboration tracks are now opening as execution maturity increases.

Early pilots forming • Select design partners onboarding

Nikhil Sharma

Celluster is founder led from architecture through runtime execution, with deep infrastructure experience across cloud, networking, dataplane, GPU systems, and execution layer design.

- Private runtime demo completed

- Enterprise pilot discussions active

- Federal grant under evaluation

- Internship and college / research collaboration opening

WHY CELLUSTER BECOMES INEVITABLE

Infrastructure control models are falling behind AI execution.

AI execution is no longer static.

Workloads shift across models, data paths, latency conditions, GPU topology, memory pressure, and coordination requirements in real time.

Current infrastructure still relies on orchestration, controllers, schedulers, and agents.

These systems do not execute intent.

They observe symptoms, infer behavior indirectly, and react after drift appears.

That model weakens as systems become more dynamic, more distributed, and more sensitive to execution behavior.

Celluster closes this gap by binding intent, telemetry, and reflex logic directly into execution.

Execution adapts from within instead of being corrected from outside.

This is not another infrastructure layer.

It is a shift in where control lives.

What exists today

This is no longer just a thesis.

The problem

Today’s systems infer from symptoms, then react too late.

Modern AI infrastructure still depends on orchestration, controllers, schedulers, agents, reconciliation loops, and external policy layers. These systems do not execute intent. They observe telemetry, infer behavior indirectly, and then try to correct drift after it emerges.

- Placement is decided upfront rather than continuously aligned with workload behavior.

- Scaling, migration, and correction are reactive events, often noisy and expensive.

- Security, policy, and coordination live outside execution, increasing control plane overhead.

- Debugging becomes ambiguous across model, data, code, infra, and resource planning.

Architecture shift

Control moves into execution. Permanently.

Celluster binds intent, telemetry, and reflex logic directly to the execution unit. Instead of an external control plane driving a workload from the outside, the workload executes inside a reflex aware substrate that can adapt continuously in place.
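As a purely illustrative sketch of that idea, and not Celluster's actual API, the toy Python below binds an intent and a reflex to an execution unit so that adaptation happens inside the step itself rather than in an external loop. Every name here (`Intent`, `Cell`, `shrink_batch_on_latency`, `simulated_work`) and the latency model are assumptions made up for this example.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Intent:
    """Declared goal for the workload (hypothetical field)."""
    max_latency_ms: float


@dataclass
class Cell:
    """Hypothetical execution unit carrying its own intent and reflexes."""
    intent: Intent
    batch_size: int = 32
    reflexes: List[Callable[["Cell", float], None]] = field(default_factory=list)

    def step(self, work: Callable[[int], float]) -> float:
        # Run one unit of work; telemetry comes from the execution itself.
        latency_ms = work(self.batch_size)
        # Reflexes fire inline, in the same step, with no external control loop.
        for reflex in self.reflexes:
            reflex(self, latency_ms)
        return latency_ms


def shrink_batch_on_latency(cell: Cell, latency_ms: float) -> None:
    """Reflex: halve the batch size when latency violates the intent."""
    if latency_ms > cell.intent.max_latency_ms and cell.batch_size > 1:
        cell.batch_size //= 2


def simulated_work(batch_size: int) -> float:
    # Toy cost model (assumption): latency grows linearly with batch size.
    return 2.0 * batch_size


cell = Cell(Intent(max_latency_ms=20.0), reflexes=[shrink_batch_on_latency])
latencies = [cell.step(simulated_work) for _ in range(4)]
print(latencies, cell.batch_size)
```

In this toy model the unit shrinks its own batch size until the observed latency satisfies the intent, then holds steady. No outside controller watches metrics or issues corrections.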

Compare

Reactive infrastructure vs reflex native execution

| Dimension | Current Infrastructure | Celluster |
|---|---|---|
| Control Model | Orchestration, controllers, schedulers, and agents live outside the workload | Control exists inside execution (Cell) |
| Placement | Static upfront placement from manifests, telemetry, and generalized algorithms | Adaptive placement aligned with workload behavior |
| Adaptation | Restart, requeue, migration, or delayed reactive correction | Continuous in place reflex adaptation |
| Behavior Visibility | Indirect, symptom driven, telemetry based | Direct, execution aware, behavior aligned |
| Operational Overhead | High control plane overhead, policy overhead, lifecycle overhead | Lower coordination overhead because control is inside execution |
| Upgrade / Migration | Rolling restarts, drain cycles, migration events | Intent driven continuity and reflex transition |
| Security | External proxies, sidecars, policy engines | Intent bound security inside execution semantics |

Where Celluster Fits

Celluster fits where behavior, coordination, and execution matter most.

| Layer | Examples | Celluster Role |
|---|---|---|
| GPU / AI Clusters | Lambda, CoreWeave, on prem GPU fabrics | Turns GPU islands into a reflexive execution fabric. |
| Kubernetes / Cloud Infra | AWS EKS, GKE, on prem Kubernetes | Operates inside, beneath, or alongside existing environments without depending on orchestration. |
| Network Policy Layer | Calico, Cilium style policies | Lifts policy from external control loops into intent bound execution semantics. |
| Sidecars & Service Logic | Service mesh, proxies, sidecars | Absorbs sidecar behavior into Cells, reducing overhead and control churn. |
| Data Center Design | Cisco, Arista, Equinix style environments | Models topology and behavior as a reflex graph rather than a static operations stack. |
| Edge / Real Time Systems | Automotive, robotics, trading, telco | Responds to live conditions without waiting for centralized reactive loops. |
| Private 5G / Campus | Private 5G cores, UPF, campus environments | Turns slices, users, and reachability into execution aware semantics. |
| Multi Tenant SaaS / Platforms | SaaS control planes, PaaS, B2B platforms | Makes tenant intent first class across isolation, routing, and quotas. |
| HPC / Research / Aerospace | Quantum control systems, HPC labs, edge mission systems | Enables intent bound execution under extreme locality, reliability, and security constraints. |

Pilots

Focused pilot track for serious infrastructure teams.

Celluster is not opening broadly. Pilot motion is focused on teams working through real AI workload, GPU cluster, infrastructure coordination, or execution unpredictability problems.

What pilots are for

- Validate execution behavior in a narrow, meaningful slice

- Measure coordination overhead removed

- Map workload behavior to intent driven execution

Who this fits

- Enterprise AI infrastructure teams

- GPU cloud / infra platforms

- Research and advanced computing environments

Talent and academic signal

Celluster is opening to research, internship, and curriculum collaborations.

As runtime maturity and pilot motion increase, Celluster is also becoming a vehicle for research exposure, advanced systems learning, and internship participation around the next wave of execution infrastructure.