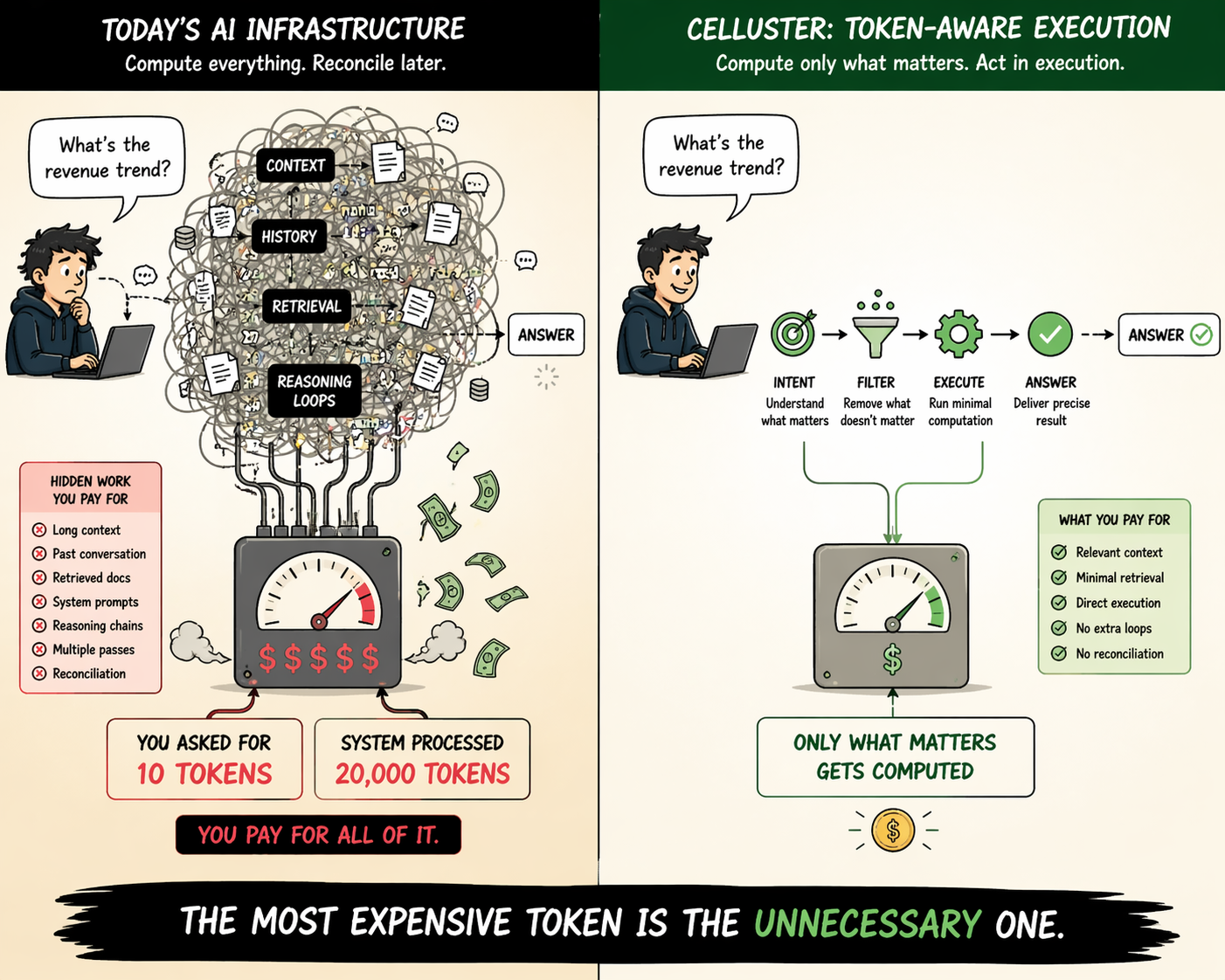

The Hidden Problem

Modern AI systems do not operate on minimal intent. They operate on expanded context:

- long conversation history

- retrieved knowledge

- system-generated reasoning chains

- cached and accumulated state

A simple query can trigger far more computation than the question itself requires.

Users pay not only for what they ask, but for everything the system decides to process.

This is an Architecture Problem

Today’s AI infrastructure is built around:

- external control loops

- post-execution reconciliation

- reactive optimization

This leads to:

- cost scaling with context, not intent

- latency driven by layered reasoning

- decision-making detached from execution

Industry Response: Optimizing the Wrong Layer

Current approaches focus on:

- GPU scheduling fairness

- model efficiency improvements

- caching and batching strategies

These optimize how efficiently compute is used.

But they do not answer: Should this computation happen at all?

Celluster: Token-Aware Execution

Celluster introduces a different model: execution-native decision-making.

Instead of optimizing after computation:

- intent is defined upfront

- telemetry is observed at execution

- decisions are applied directly where computation happens

This enables:

- token-aware execution

- real-time cost control

- elimination of unnecessary computation

- alignment of infrastructure behavior with economic intent
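To make the model above more concrete, here is a minimal Python sketch of the general idea. It is an illustration under stated assumptions, not Celluster's implementation. Intent is declared upfront as a token budget, token counts are observed at the point of execution, and the decision about what to compute is applied before any model call. `Intent`, `ContextItem`, `plan_execution`, the relevance scores, and the whitespace-based token count are all hypothetical stand-ins.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Intent:
    """Hypothetical upfront declaration of how much input a request is worth."""
    max_input_tokens: int


@dataclass
class ContextItem:
    text: str
    relevance: float  # assumed 0..1 score, e.g. produced by a retriever


def count_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: one token per whitespace-separated word.
    return len(text.split())


def plan_execution(
    query: str, context: list[ContextItem], intent: Intent
) -> Optional[tuple[list[ContextItem], int]]:
    """Decide, before any model call, which context is worth paying for.

    Returns (selected_items, tokens_used), or None if the query alone
    already exceeds the declared budget.
    """
    used = count_tokens(query)
    if used > intent.max_input_tokens:
        # Refuse instead of running computation the intent does not cover.
        return None

    selected = []
    # Greedily admit the most relevant context items that still fit the budget.
    for item in sorted(context, key=lambda c: c.relevance, reverse=True):
        cost = count_tokens(item.text)
        if used + cost <= intent.max_input_tokens:
            selected.append(item)
            used += cost
    return selected, used


# Usage: only the relevant context survives a tight, intent-defined budget.
history = [
    ContextItem("user asked about invoices last week", 0.2),
    ContextItem("refund policy allows returns within 30 days", 0.9),
    ContextItem("long unrelated chat about the weather today and tomorrow", 0.1),
]
selected, used = plan_execution(
    "what is the refund window", history, Intent(max_input_tokens=12)
)
print(used, [c.text for c in selected])
```

Greedy, relevance-ordered admission is only one simple policy; a real system would count tokens with the model's own tokenizer and might also weigh latency or per-token price when deciding what to drop.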

From GPU Scheduling → To Token Scheduling

Traditional systems optimize GPU allocation.

Celluster optimizes execution semantics.

The problem shifts from “How do we schedule compute efficiently?” to “What should be computed at all?”

The Result

- lower cost per workload

- reduced context bloat

- faster execution paths

- infrastructure aligned with intent

A New Constraint for AI Systems

As token-based pricing becomes standard, infrastructure must become economically aware.

Not as an optimization layer, but as a core execution principle.

Celluster is built for that world.